TL;DR:

- Data analysis has transformed UK criminal investigations, enabling precise hotspot identification and crime linking. However, successful implementation relies on high-quality data, effective governance, and maintaining human investigative judgment alongside technological tools. Support from digital forensics experts ensures evidence is court-ready, while balanced oversight prevents over-reliance on AI.

Data analysis has fundamentally altered what is possible in British criminal investigations, and the results are no longer theoretical. West Midlands Police’s Project Guardian demonstrated this directly, achieving a 16% reduction in knife crime by layering geospatial and temporal data to pinpoint where and when violent crime concentrates. For law enforcement officials and legal professionals operating in the UK, understanding exactly how these methods work, and where their limits lie, is now essential professional knowledge rather than optional background reading.

Table of Contents

- Understanding modern data analysis in UK law enforcement

- Real-world impact: How data analysis advances criminal investigations

- Extracting actionable intelligence: From predictive modelling to digital forensics

- Challenges, risks, and governance in the age of Police.AI

- Why data-driven policing succeeds or fails in the real world

- Bridging data analysis and evidence: Support for your investigations

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Modern policing revolution | Data analysis and forensic science are transforming how UK law enforcement investigates and solves crime. |
| Tangible results | Projects like geospatial crime mapping and predictive modelling have delivered measurable crime reductions and faster case resolutions. |
| Human expertise essential | Technology is a force multiplier, but success still depends on knowledgeable investigators ensuring data is used ethically and accurately. |
| Governance and oversight | Ongoing evaluation and evidence-based adoption are vital to minimise bias, error, and ensure public trust in AI and data-driven policing. |

Understanding modern data analysis in UK law enforcement

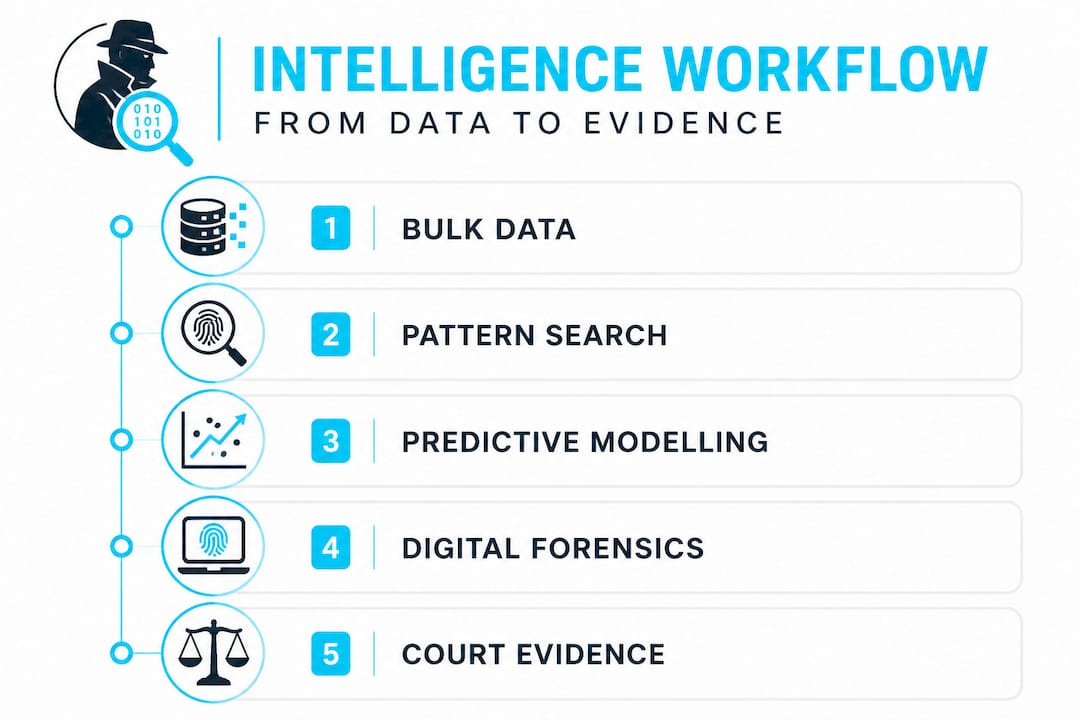

With the importance of data-driven policing now established across major forces, it helps to clarify precisely what these terms mean in practice. “Data analysis in policing” covers a broad spectrum of techniques, from simple trend mapping to sophisticated machine learning. Three concepts matter most for investigators and legal professionals working with the resulting evidence.

Bulk data exploitation refers to the systematic processing of enormous datasets, such as telecommunications records, ANPR (Automatic Number Plate Recognition) logs, and financial transactions, to uncover patterns that individual manual review would miss entirely. The National Crime Agency’s National Data Exploitation Capability (NDEC) applies this approach specifically to serious organised crime, combining datasets from multiple agencies to construct activity timelines and network maps at a scale previously impossible.

Predictive modelling takes historical crime data and calculates probability scores for future events, helping commanders deploy resources before incidents occur rather than reacting afterwards. Crime linkage uses algorithmic comparisons of offence characteristics to identify whether separate crimes were committed by the same individual, even when no physical evidence connects them.
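To make the predictive modelling idea concrete, the following is a minimal sketch rather than any force's actual model. It assumes invented weekly incident counts per area and estimates the probability of at least one incident in the coming week using a Laplace-smoothed proportion. The area names, counts, and the function `incident_probability` are all hypothetical.

```python
# Hypothetical weekly incident counts per area (invented for illustration).
WEEKLY_COUNTS = {
    "area_a": [2, 0, 1, 3, 1, 0, 2, 1],
    "area_b": [0, 0, 1, 0, 0, 0, 0, 1],
}


def incident_probability(counts):
    """Laplace-smoothed share of past weeks with at least one incident."""
    weeks_with_incident = sum(1 for count in counts if count > 0)
    # Adding 1 and 2 keeps the estimate away from exactly 0 or 1
    # when the history is short.
    return (weeks_with_incident + 1) / (len(counts) + 2)


scores = {area: incident_probability(counts) for area, counts in WEEKLY_COUNTS.items()}
ranked_areas = sorted(scores, key=scores.get, reverse=True)
```

A score like this only summarises recorded history. It inherits every gap and bias in the underlying records, which is why it is better read as a prompt for analyst attention than as a forecast.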

Understanding legal forensics best practices is critical because the quality of evidence produced by these methods depends entirely on how data is collected, processed, and documented for court.

Types of data routinely analysed by UK forces include:

- Geospatial data: Precise location coordinates from devices, CCTV, and reported incidents

- Temporal data: Time-of-day, day-of-week, and seasonal patterns in offending

- Modus operandi (MO) data: Method of entry, weapon use, approach strategy, and victim selection

- Communications data: Call records, messaging metadata, and social media activity

- Financial transaction data: Cash withdrawal patterns, unusual transfers, and illicit payment networks

- Biometric data: Facial recognition outputs, fingerprint databases, and DNA profiles

| National initiative | Primary focus | Key data types used |
|---|---|---|
| NCA NDEC | Serious and organised crime | Bulk telecoms, financial, intelligence |
| West Midlands Project Guardian | Violent and knife crime | Geospatial, CCTV, incident logs |
| ViCLAS (Violent Crime Linkage Analysis System) | Serial offender identification | MO, victim profile, crime scene data |
| Project Odyssey | RASSO (Rape and Serious Sexual Offences) | Device extraction, communications |

The role of expert witnesses has grown substantially as this type of evidence reaches court, because judges and juries require clear, accessible explanations of how algorithmic outputs were generated and what they reliably prove.

Real-world impact: How data analysis advances criminal investigations

Once the landscape is clear, it is crucial to see these approaches at work in actual UK investigations. The results are striking and, in several documented cases, directly responsible for disrupting serious offending.

West Midlands Police’s Project Guardian combined geospatial overlays with temporal analysis, identifying violent crime hotspots at specific times and locations rather than treating an entire borough as a uniform risk zone. Officers were then deployed to these precise windows and locations, with measurable results rather than anecdotal impressions. The 16% reduction in knife crime is a significant outcome in a high-volume, high-harm category.
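The general idea of layering geospatial and temporal data can be sketched in a few lines. This is an illustrative example only, not Project Guardian's method: the coordinates, timestamps, grid size, and time-block width are all assumptions. It counts incidents per grid cell, weekday, and time-of-day block, then ranks the busiest combinations.

```python
from collections import Counter
from datetime import datetime

# Hypothetical incident records: (latitude, longitude, ISO timestamp).
# The coordinates and times are invented for illustration only.
INCIDENTS = [
    (52.4791, -1.9012, "2023-03-03T22:15:00"),
    (52.4794, -1.9018, "2023-03-10T23:05:00"),
    (52.4789, -1.9015, "2023-03-17T22:40:00"),
    (52.4902, -1.8853, "2023-03-04T14:20:00"),
    (52.4793, -1.9011, "2023-03-24T21:55:00"),
]

CELL_SIZE_DEG = 0.005  # assumed grid resolution, roughly a few hundred metres
BLOCK_HOURS = 4        # assumed width of each time-of-day window


def bin_key(lat, lon, timestamp):
    """Map an incident to (grid row, grid column, weekday, time block)."""
    when = datetime.fromisoformat(timestamp)
    row = int(lat // CELL_SIZE_DEG)
    col = int(lon // CELL_SIZE_DEG)
    # weekday() returns 0 for Monday through 6 for Sunday.
    return (row, col, when.weekday(), when.hour // BLOCK_HOURS)


def rank_hotspots(incidents, top_n=3):
    """Count incidents per space-time bin and return the busiest bins."""
    counts = Counter(bin_key(*incident) for incident in incidents)
    return counts.most_common(top_n)


hotspots = rank_hotspots(INCIDENTS)
```

With the invented data above, four of the five incidents fall into the same cell on Friday evenings, which is the kind of narrow window that targeted deployment relies on. A real analysis would also need to account for recording quality and population exposure before drawing conclusions.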

Crime linkage represents a similarly powerful capability. Algorithms using Bayesian probability analysis, regression modelling, and decision tree methods compare crime features across reported sexual offences to identify series that would remain invisible to investigators handling cases individually. ViCLAS applies this specifically to violent and sexual offences, building a national picture that individual force investigation teams simply cannot maintain manually.

| Approach | Traditional method | Data-driven method |
|---|---|---|
| Suspect identification | Witness statements, local knowledge | Cross-referenced digital, geospatial, and MO data |
| Crime series detection | Detective intuition and manual review | Algorithmic linkage across thousands of records |
| Resource deployment | Reactive, based on reported incidents | Predictive, based on modelled risk scores |
| Evidence processing | Sequential review of physical exhibits | Parallel automated extraction and flagging |
| Timeline reconstruction | Interview-led and document review | Device metadata, location data, transaction logs |

Here is how crime linkage algorithms work in sequence within a live investigation:

1. Data ingestion: All available crime reports for a defined offence category are entered into the analytical system, including location, time, victim demographics, and MO details.

2. Feature extraction: The algorithm isolates comparable variables, such as approach method, victim vulnerability indicators, and geographic range.

3. Probabilistic scoring: Each pair of crimes is assigned a linkage probability score using Bayesian or regression models.

4. Cluster identification: Crimes above a defined threshold are grouped into potential series for human analyst review.

5. Investigative prioritisation: Analysts review clusters, add contextual knowledge, and elevate the most credible series to dedicated investigation teams.

6. Evidence submission: Linkage findings, with supporting data, are packaged as admissible intelligence products for court proceedings.
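The scoring and clustering stages of this sequence can be sketched as follows. This is a simplified illustration using a naive Bayes calculation, not the algorithm used by ViCLAS or any other operational system. The offence records, feature probabilities, prior, and threshold are all invented assumptions, and real values would have to be estimated from solved cases.

```python
from itertools import combinations

# Hypothetical offence records with coded MO features (invented for illustration).
CRIMES = {
    "C1": {"approach": "con", "entry": "rear window", "area": "north"},
    "C2": {"approach": "con", "entry": "rear window", "area": "north"},
    "C3": {"approach": "blitz", "entry": "front door", "area": "south"},
    "C4": {"approach": "con", "entry": "rear window", "area": "east"},
}

# Assumed probabilities that a feature matches when two crimes share an
# offender versus when they do not.
P_MATCH_SAME = {"approach": 0.8, "entry": 0.7, "area": 0.6}
P_MATCH_DIFF = {"approach": 0.3, "entry": 0.2, "area": 0.25}
PRIOR_LINKED = 0.05  # assumed prior probability that any two crimes are linked
THRESHOLD = 0.5      # assumed cut-off for sending a pair to analyst review


def linkage_probability(a, b):
    """Naive Bayes posterior that two crimes share an offender."""
    odds = PRIOR_LINKED / (1 - PRIOR_LINKED)
    for feature in P_MATCH_SAME:
        if a[feature] == b[feature]:
            odds *= P_MATCH_SAME[feature] / P_MATCH_DIFF[feature]
        else:
            odds *= (1 - P_MATCH_SAME[feature]) / (1 - P_MATCH_DIFF[feature])
    return odds / (1 + odds)


def cluster_series(crimes, threshold):
    """Group crimes whose pairwise score exceeds the threshold (union-find)."""
    parent = {cid: cid for cid in crimes}

    def find(cid):
        while parent[cid] != cid:
            cid = parent[cid]
        return cid

    for x, y in combinations(sorted(crimes), 2):
        if linkage_probability(crimes[x], crimes[y]) > threshold:
            parent[find(y)] = find(x)

    groups = {}
    for cid in sorted(crimes):
        groups.setdefault(find(cid), []).append(cid)
    # Only groups of two or more crimes count as a potential series.
    return [members for members in groups.values() if len(members) > 1]


series = cluster_series(CRIMES, THRESHOLD)
```

With these invented figures, C1 and C2 score roughly 0.54 and form a candidate series, while C4 shares two features with them but falls below the threshold at roughly 0.21. That borderline case is precisely where analyst review, not the score, should decide what happens next.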

For digital forensic investigation roles supporting these cases, understanding this workflow ensures that device evidence and digital artefacts are presented in a format that complements algorithmic linkage outputs rather than existing in isolation.

Pro Tip: Always cross-reference geospatial data with communications records and financial transaction logs when building a suspect profile. Each individual source may appear inconclusive, but triangulation across three or more data types dramatically increases evidential confidence and reduces the risk of challenge at trial.
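As a rough illustration of triangulation, the sketch below checks how many independent source types place activity close to a hypothetical incident time. The observations, the 30-minute tolerance, and the function `corroborating_sources` are assumptions made for the example, not a standard tool.

```python
from datetime import datetime, timedelta

INCIDENT_TIME = datetime(2024, 5, 3, 22, 0)  # hypothetical offence time
TOLERANCE = timedelta(minutes=30)            # assumed matching window

# Hypothetical observations drawn from three kinds of record.
OBSERVATIONS = [
    {"source": "geospatial", "time": datetime(2024, 5, 3, 21, 52),
     "detail": "device located near scene"},
    {"source": "communications", "time": datetime(2024, 5, 3, 22, 10),
     "detail": "call connected via nearby mast"},
    {"source": "financial", "time": datetime(2024, 5, 3, 21, 40),
     "detail": "card payment at local shop"},
    {"source": "financial", "time": datetime(2024, 5, 1, 9, 15),
     "detail": "unrelated withdrawal"},
]


def corroborating_sources(observations, incident_time, tolerance):
    """Return the distinct source types with an observation near the incident time."""
    return sorted(
        {obs["source"] for obs in observations
         if abs(obs["time"] - incident_time) <= tolerance}
    )


sources = corroborating_sources(OBSERVATIONS, INCIDENT_TIME, TOLERANCE)
meets_triangulation = len(sources) >= 3
```

Counting source types is only a first filter. Whether the sources are genuinely independent, and whether each record is reliable, remains a matter for the investigator and, ultimately, the court.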

Extracting actionable intelligence: From predictive modelling to digital forensics

With practical examples covered, it is important to examine how these techniques actually produce court-ready intelligence. The shift from raw data to usable evidence involves several specialised processes that law enforcement teams and their forensic partners now apply routinely.

Deep coding is a technique developed by the Mayor’s Office for Policing and Crime (MOPAC) in which police records are systematically interrogated for vulnerability indicators buried within routine incident logs. In rape, domestic abuse, and stalking cases, this process extracts patterns from thousands of records that no individual officer would identify by reading case files. The outputs feed predictive vulnerability models that help forces identify victims at elevated risk before repeat offences occur.

Time-slicing forensics represents one of the most significant practical advances in recent years. Project Odyssey demonstrated that targeted device data extraction can be completed in approximately three hours when investigators know precisely what evidence window they need, compared with the months that full-device forensic analysis previously required. In RASSO (Rape and Serious Sexual Offences) cases, this radically changes the investigative timeline and reduces the burden on victims whose devices have historically been retained for extended periods.

“The ability to extract precisely defined data windows from a device in hours rather than months is not just an efficiency improvement. It is a justice improvement, reducing victim re-traumatisation and accelerating court-ready evidence production.”
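The scoping logic behind time-slicing can be illustrated with a short sketch. Real extractions are carried out with specialist forensic tools at the acquisition stage, so this example only shows the filtering principle on artefacts that are assumed to be already parsed. The records, field names, and the function `time_slice` are hypothetical.

```python
from datetime import datetime

# Hypothetical artefacts already parsed from a device extraction.
ARTEFACTS = [
    {"type": "message", "timestamp": "2024-05-01T09:12:00", "ref": "msg-001"},
    {"type": "message", "timestamp": "2024-05-03T21:47:00", "ref": "msg-002"},
    {"type": "location", "timestamp": "2024-05-03T22:05:00", "ref": "loc-001"},
    {"type": "photo", "timestamp": "2024-05-09T13:30:00", "ref": "img-001"},
]


def time_slice(artefacts, start, end, allowed_types):
    """Keep only artefacts of agreed types inside the agreed window [start, end)."""
    window_start = datetime.fromisoformat(start)
    window_end = datetime.fromisoformat(end)
    selected = []
    for item in artefacts:
        when = datetime.fromisoformat(item["timestamp"])
        if window_start <= when < window_end and item["type"] in allowed_types:
            selected.append(item)
    return selected


in_scope = time_slice(
    ARTEFACTS,
    start="2024-05-03T18:00:00",
    end="2024-05-04T06:00:00",
    allowed_types={"message", "location"},
)
```

Here only `msg-002` and `loc-001` fall inside the agreed window and data types. Everything else stays out of scope, which is the property that limits intrusion into a victim's wider digital life.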

Following best practice forensics analysis standards during these extractions is non-negotiable, because any procedural error can compromise admissibility regardless of how compelling the underlying evidence appears.

Actionable intelligence types produced through these methods include:

- Identity intelligence: Device ownership, account registration data, and communication metadata establishing who was present or active

- Temporal intelligence: Precise event timelines reconstructed from metadata, GPS logs, and app activity records

- Vulnerability intelligence: Behavioural and circumstantial indicators flagging victims or suspects at elevated risk

- Network intelligence: Communication maps showing relationships between individuals across multiple devices and platforms

- Device trace intelligence: Evidence of data deletion, application use, and location history, some of which may remain recoverable after attempted deletion or a factory reset, depending on the device and its encryption

Pro Tip: Securing informed victim consent for digital device extractions at the earliest possible stage dramatically accelerates evidence processing. When consent is documented clearly and scope is defined precisely from the outset, time-slicing tools can be applied immediately, avoiding the legal and procedural delays that extend timelines unnecessarily in sensitive cases.

Understanding how to identify cybercrime evidence is equally important because in complex investigations, digital and physical evidence streams must be managed in parallel rather than sequentially to maintain evidential integrity.

Challenges, risks, and governance in the age of Police.AI

Of course, the promise of data analysis is balanced by significant challenges and risks in real-world implementation. The appetite for AI and data tools in policing has outpaced governance frameworks in some areas, and experienced practitioners know that technology alone does not deliver better outcomes.

The most fundamental risk is data quality. Poor input data corrupts AI outputs in precisely the same way that inaccurate witness testimony undermines a prosecution case. Forces operating with incomplete crime recording, inconsistent MO coding, or siloed databases produce analytical outputs that may be systematically misleading. The phrase “garbage in, garbage out” is technically unsophisticated, but it accurately describes the failure mode of every data-driven policing tool deployed on poor-quality records.

Algorithmic bias is a related but distinct concern. If historical crime data reflects biased enforcement patterns, such as disproportionate stop-and-search in particular communities, a predictive model trained on that data will replicate and potentially amplify those patterns. This is not merely an ethical problem. It is an evidential one, because defence counsel will challenge any algorithm whose training data can be shown to encode systematic discrimination.
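One basic check that an auditor might run is to compare how often a model flags different areas or groups. The sketch below is an assumption-laden illustration, not a complete fairness audit: the predictions are invented, and `flag_rates` and `disparity_ratio` are hypothetical helper names.

```python
from collections import defaultdict

# Hypothetical model outputs: (group or area label, whether the model flagged it).
PREDICTIONS = [
    ("area_a", True), ("area_a", True), ("area_a", False), ("area_a", True),
    ("area_b", False), ("area_b", True), ("area_b", False), ("area_b", False),
]


def flag_rates(predictions):
    """Share of records flagged by the model within each group."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, is_flagged in predictions:
        totals[group] += 1
        flagged[group] += int(is_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Highest flag rate divided by the lowest; 1.0 means equal rates."""
    lowest = min(rates.values())
    if lowest == 0:
        return float("inf")
    return max(rates.values()) / lowest


rates = flag_rates(PREDICTIONS)
ratio = disparity_ratio(rates)
```

In this invented example, area_a is flagged three times as often as area_b. A gap like that does not prove bias on its own, since underlying offending may differ, but it is the kind of figure that should trigger a closer look at how the training data was recorded.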

“Deploying AI tools without robust, independently evaluated evidence of their effectiveness is not innovation. It is experimentation conducted on communities and victims who deserve better.”

Key risks that governance frameworks must address include:

- Data silos: Incompatible systems across forces preventing effective cross-agency analysis

- Algorithmic opacity: Inability to explain model outputs in terms that satisfy legal scrutiny

- Lack of domain expertise: Analysts who understand statistics but not investigative practice, or investigators who trust outputs they cannot evaluate

- Governance gaps: Absence of regular, independent auditing of AI tool accuracy and fairness

- Over-reliance: Treating algorithmic probability scores as conclusions rather than investigative starting points

Effective digital forensics in corporate investigations demonstrates why the same governance principles matter outside policing: evidence produced without documented methodology and oversight will not withstand scrutiny in any adversarial legal proceeding.

Law enforcement training that addresses these specific human factors remains a critical gap in many forces’ data adoption strategies.

Why data-driven policing succeeds or fails in the real world

Having worked closely with law enforcement teams and legal professionals on digital evidence cases, we hold a clear view on where the gap between promise and reality in data-driven policing actually sits. It is rarely in the technology.

The forces and investigation teams that achieve consistent, sustainable results from data analysis are those that treat algorithmic outputs as one input among many rather than as authoritative conclusions. They employ analysts who understand both statistics and investigative practice, and they maintain healthy scepticism about any finding that cannot be independently verified through traditional methods. The evidence-based adoption of AI tools is a legitimate and productive ambition, but optimism about precision policing must be matched by equal rigour around data quality, governance, and the resource investment that serious analysis genuinely requires.

The uncomfortable truth is that the “data solves everything” narrative creates real harm. It encourages under-resourcing of experienced investigator capacity on the assumption that algorithms can compensate. They cannot. A predictive model that flags a high-risk postcode cannot conduct a victim-centred interview. A linkage algorithm that identifies a probable series cannot assess whether a suspect’s explanation has the ring of truth. These judgements require human expertise, and pairing data analysis best practices with genuine investigative experience is what produces evidence that holds up under the pressure of cross-examination.

Balancing technical efficiency with human oversight is not a compromise. It is the standard of practice that separates forces producing conviction-ready evidence from those generating investigative activity that stalls before trial.

Pro Tip: When presenting data-derived evidence in legal proceedings, document every step of the analytical methodology, including data sources, cleaning decisions, model parameters, and analyst review stages. A finding that cannot be fully explained in court carries limited evidential weight regardless of how statistically significant it appears.
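One way to keep such a record is an append-only log in which each entry is hashed and chained to the one before it, so later alteration becomes detectable. The following is a minimal sketch under that assumption, not a substitute for a force's case management or exhibit handling system. The class `AnalysisLog`, the step names, and the analyst identifiers are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 hex digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


class AnalysisLog:
    """Append-only record of analytical steps, each chained to the previous entry."""

    def __init__(self):
        self.entries = []

    def record(self, step, details, analyst):
        previous_hash = self.entries[-1]["entry_hash"] if self.entries else ""
        entry = {
            "step": step,
            "details": details,
            "analyst": analyst,
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "previous_hash": previous_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["entry_hash"] = sha256_of(payload)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and confirm the chain is unbroken."""
        previous_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if entry["previous_hash"] != previous_hash:
                return False
            if sha256_of(payload) != entry["entry_hash"]:
                return False
            previous_hash = entry["entry_hash"]
        return True


log = AnalysisLog()
source_data = b"incident_id,lat,lon\n1,52.4791,-1.9012\n"  # stand-in for a real export
log.record("data source", {"file_sha256": sha256_of(source_data)}, "analyst_01")
log.record("cleaning", {"rule": "dropped rows with missing coordinates"}, "analyst_01")
log.record("model parameters", {"threshold": 0.5, "prior": 0.05}, "analyst_01")
log.record("analyst review", {"outcome": "series referred for investigation"}, "analyst_02")
```

Calling `log.verify()` confirms that no entry has been changed since it was recorded. The log covers the four elements named in the tip: data sources, cleaning decisions, model parameters, and analyst review stages.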

Bridging data analysis and evidence: Support for your investigations

For professionals looking to put advanced data analysis into practice, support from experienced digital forensics teams can be invaluable. Understanding where digital footprints exist across devices, platforms, and cloud services is the foundation of any evidence strategy that will withstand legal scrutiny. Computer Forensics Lab works directly with law enforcement and legal professionals across the UK, providing expert witness reports, maintaining strict chain of custody, and applying advanced extraction and analysis techniques to cases involving cybercrime, serious violence, and complex financial fraud. Our team combines technical forensic expertise with a clear understanding of what courts require, ensuring that professional forensics services translate directly into conviction-ready evidence packages rather than technical reports that require extensive interpretation.

Frequently asked questions

How does data analysis help police solve crimes more quickly?

By automating pattern recognition and directing resources to identified hotspots, data analysis allows investigators to act on prioritised, high-confidence intelligence rather than working through every lead sequentially. West Midlands Police’s Project Guardian showed that geospatial and temporal targeting of violent crime hotspots can produce measurable reductions in serious offending, with a 16% reduction in knife crime.

What risks come with using AI in law enforcement investigations?

The principal risks are poor input data quality, algorithmic bias from historically skewed records, and insufficient transparency in how outputs are generated. Active governance and human oversight are essential safeguards, not optional additions, when deploying AI tools in evidential contexts.

Can data analysis replace traditional investigative work?

No. Data tools produce the most reliable results when they augment experienced investigator judgement rather than substitute for it. Balancing technical efficiency with human oversight remains the defining standard for forces producing evidence that survives adversarial legal scrutiny.

How fast can critical digital evidence now be extracted?

With time-slicing approaches developed under initiatives such as Project Odyssey, targeted device data extraction can be completed in approximately three hours in appropriately scoped RASSO cases, compared with the months that full device analysis previously demanded.